OpenAI has launched Trusted Contact for ChatGPT, which will allow users to nominate a friend that the company can contact if they’re at risk of harming themselves. More and more people have been using ChatGPT as a digital therapist, relying on the chatbot for their mental health needs. OpenAI previously told the BBC that more than a million of its 800 million weekly users express suicidal thoughts in their conversations.

Last year, OpenAI faced a wrongful death lawsuit accusing the company of enabling a teenager’s suicide. The lawsuit alleged that the teenager talked to ChatGPT about four previous attempts to end his life and that the chatbot then helped him plan his actual suicide. The BBC’s investigation, published in November 2025, found that in at least one instance, ChatGPT advised a user on how to kill herself. OpenAI told the news organization that it had improved how its chatbot responds to people in distress since then.

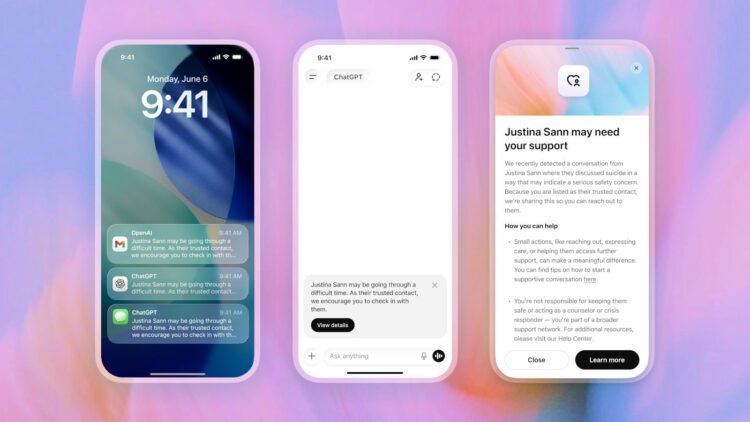

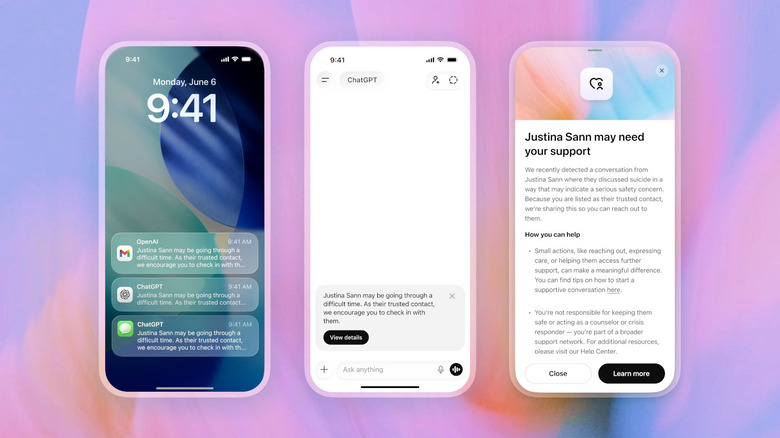

Trusted Contact builds off of ChatGPT’s parental controls, giving adults 18 and above the option to add the details of someone who could help them in case they’re on the verge of self-harming. Users will be able to nominate one adult as their Trusted Contact in ChatGPT settings, and that person will then have to accept the invitation they receive within one week. If they fail to accept it, the user can choose to add another contact instead. ChatGPT’s system will first warn the user that the company may notify their contact if it detects a serious possibility of them hurting themselves. It will encourage the user to reach out to their friend and will even suggest potential conversation starters.

The process isn’t fully automated. OpenAI says a “small team of specially trained people” will review the situation, and only if they determine that there’s a serious risk of self-harm will ChatGPT send the user’s contact an email, a text message or an in-app notification.

“[The user] may be going through a difficult time,” the message will read. “As their Trusted Contact, we encourage you to check in with them.” From there, the contact can view more details about the warning, which tell them that OpenAI has detected a conversation in which the user discussed suicide. However, to protect user privacy, the company will not send them transcripts of the conversation. “While no system is perfect, and a notification to a Trusted Contact may not always reflect exactly what someone is experiencing, every notification undergoes trained human review before it is sent, and we strive to review these safety notifications in under one hour,” the company wrote in its announcement.

If you or someone you know is experiencing suicidal thoughts, don’t hesitate to contact the National Suicide Prevention Lifeline at 1-800-273-8255. The line is open 24/7, and there’s also an online chat if a phone isn’t available.